Feature Engineering for Analysts: Unlocking Data’s Potential

This article explores the essentials of feature engineering, key techniques, tools, best practices, and emerging trends as of 2025, tailored for data analysts.

In the fast-evolving world of data analysis, transforming raw data into actionable insights is a core skill. Feature engineering, the process of creating or refining data features, is a powerful technique that enhances the performance of machine learning models and deepens analytical insights. For data analysts, mastering feature engineering bridges the gap between raw datasets and meaningful outcomes, whether for predictive modeling or exploratory analysis.

Introduction to Feature Engineering

Feature engineering involves using domain knowledge to create new features or modify existing ones to improve the performance of machine learning algorithms or enhance data analysis. It encompasses tasks like handling missing values, encoding categorical variables, scaling numerical features, creating interaction terms, and selecting the most relevant features. For data analysts, feature engineering is not just about preparing data for models but also about crafting variables that reveal hidden patterns during exploratory data analysis (EDA).

The importance of feature engineering lies in its ability to make data more suitable for analysis. Well-engineered features can improve model accuracy, reduce training time, and make results more interpretable. For example, in a retail dataset, creating a feature like “average purchase value per customer” can highlight spending patterns, aiding both modeling and business insights.

Why Feature Engineering Matters for Analysts

Data analysts often focus on exploring data, generating reports, and providing insights, but feature engineering plays a critical role in these tasks. By transforming raw data into meaningful features, analysts can uncover trends, improve visualizations, and prepare data for advanced analytics or machine learning. For instance, in marketing, deriving features like “days since last purchase” can reveal customer engagement patterns.

Moreover, feature engineering allows analysts to incorporate domain expertise, making their analyses more relevant. In smaller teams, where analysts may take on data science tasks, feature engineering becomes even more essential for building effective models. Even without modeling, well-crafted features enhance the clarity of dashboards and reports, making insights more actionable.

Key Feature Engineering Techniques

Below are the core techniques that data analysts can use to transform their data, with practical examples and considerations.

1. Handling Missing Values

Missing data is a common challenge in real-world datasets. Analysts can address it through:

Imputation: Replace missing values with statistical measures like mean, median, or mode. For numerical data, median imputation is robust to outliers. For categorical data, mode imputation is common. Advanced methods, like K-Nearest Neighbors (KNN) imputation, use similar records to estimate missing values.

Deletion: Remove rows or columns with missing values if the missing data is minimal (e.g., less than 10% of the dataset). This is less ideal for small datasets, as it reduces sample size.

Example: In a customer dataset, missing “age” values could be imputed with the median age to maintain data integrity.

2. Encoding Categorical Variables

Machine learning models require numerical inputs, so categorical variables (e.g., “color” with values “red,” “blue,” “green”) must be converted. Common methods include:

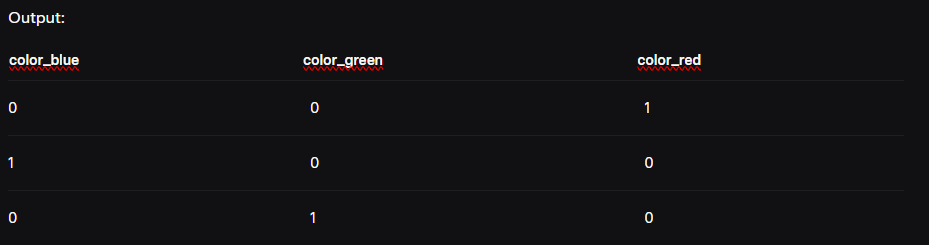

One-Hot Encoding: Creates binary columns for each category. For example, “color” becomes three columns:

color_red,color_blue,color_green.Label Encoding: Assigns numerical labels (e.g., red=1, blue=2, green=3), suitable for ordinal data.

Target Encoding: Replaces categories with the mean of the target variable, useful for high-cardinality features (e.g., zip codes).

Python Example (One-Hot Encoding with Pandas):

import pandas as pd

df = pd.DataFrame({'color': ['red', 'blue', 'green']})

df_encoded = pd.get_dummies(df, columns=['color'])

3. Feature Scaling

Feature scaling ensures numerical features are on comparable scales, which is critical for algorithms like linear regression or neural networks. Common methods are:

Normalization: Scales features to a range of 0 to 1, ideal for bounded data.

Standardization: Transforms features to have a mean of 0 and a standard deviation of 1, suitable for normally distributed data.

Example: Standardizing “house size” in square feet ensures it doesn’t dominate smaller-scale features like “number of bedrooms.”

4. Creating New Features

Deriving new features can capture complex relationships. Techniques include:

Interaction Terms: Combine features (e.g., multiply “length” and “width” to create “area”).

Polynomial Features: Generate higher-order terms (e.g.,

x^2,x*y) to model non-linear relationships.Domain-Specific Features: Use expert knowledge, such as calculating “customer lifetime value” in marketing.

Example: In a house price dataset, create “house age” by subtracting “year built” from the current year.

5. Feature Selection

Feature selection reduces dimensionality by choosing the most relevant features, improving model performance and reducing overfitting. Methods include:

Filter Methods: Use statistical tests like correlation analysis or chi-square tests.

Wrapper Methods: Employ algorithms like recursive feature elimination (RFE).

Embedded Methods: Use models like LASSO regression that inherently select features.

Example: In a sales dataset, correlation analysis might reveal that “store size” is highly correlated with sales, making it a key feature.

Advanced Feature Engineering Techniques

For analysts seeking to go beyond the basics, advanced techniques can provide deeper insights:

Target Encoding: Replaces high-cardinality categorical features with target variable means, using smoothing to prevent overfitting.

Polynomial Feature Generation: Creates polynomial combinations to capture non-linear patterns, guided by domain knowledge or statistical tests.

Time-Based Feature Extraction: For time-series data, derive features like lag values or rolling averages to capture temporal trends.

Embedding Representations: Uses neural network embeddings to map categorical variables into continuous spaces, common in recommendation systems.

These techniques require careful application to avoid overfitting and should be validated with cross-validation.

Tools for Feature Engineering

Analysts have access to a range of tools to streamline feature engineering:

Programming Libraries:

Pandas for data manipulation and transformation.

Scikit-Learn for encoding, scaling, and feature selection.

No-Code Platforms:

Automated Feature Engineering:

Featuretools automates feature creation for relational data.

TSFresh generates features for time-series data.

Autofeat creates synthetic features using machine learning.

Best Practices for Effective Feature Engineering

To maximize the impact of feature engineering, analysts should follow these best practices:

Conduct Thorough EDA: Use tools like histograms, box plots, and correlation heatmaps to understand data distributions and relationships. Resources like DataCamp’s EDA tutorial can guide this process.

Avoid Data Leakage: Apply feature transformations only to training data and consistently to test data to prevent biased results.

Validate Impact: Use cross-validation to measure how features affect model performance or analytical outcomes.

Leverage Domain Knowledge: Incorporate business or industry insights to create meaningful features, such as financial ratios in finance datasets.

Iterate and Experiment: Test different feature combinations and validate their impact to find the optimal set.

Real-World Example: House Price Prediction

Consider a house price prediction dataset with features like “number of bedrooms,” “square footage,” “location,” and “year built.” Applying feature engineering can significantly improve model performance:

Handling Missing Values: Impute missing “number of bedrooms” with the median to avoid bias from outliers.

Encoding Categorical Variables: Use one-hot encoding for “location” to create binary columns for each city.

Creating New Features: Calculate “house age” by subtracting “year built” from 2025.

Feature Scaling: Standardize “square footage” to align with other numerical features.

Feature Selection: Use correlation analysis to identify key predictors like “square footage” and “house age.”

In practice, these steps could improve a model’s R-squared value from 0.65 to 0.85, demonstrating the power of feature engineering.

Emerging Trends in Feature Engineering

As of 2025, advancements in machine learning are reshaping feature engineering. Models like graph transformers, such as the Graph Generative Pre-trained Transformer, can learn representations directly from graph-structured data, reducing the need for manual feature engineering in domains like social networks or molecular design. Automated tools like Featuretools and TSFresh are also gaining traction, enabling analysts to generate features efficiently. An X post by @jure highlights how pretrained graph transformers can tackle predictive tasks without extensive feature engineering, suggesting a shift toward model-driven feature extraction.

However, for most tabular datasets, manual feature engineering remains critical, especially for analysts working with structured data in industries like finance, retail, or healthcare.

Challenges and Considerations

Feature engineering is not without challenges. Over-engineering features can lead to overfitting, where models perform well on training data but poorly on new data. Additionally, complex transformations may reduce interpretability, which is critical for stakeholder communication. Analysts should balance complexity with simplicity and validate all transformations rigorously.

Conclusion

Feature engineering is a vital skill for data analysts, enabling them to transform raw data into powerful insights. By mastering techniques like handling missing values, encoding categorical variables, scaling features, creating new variables, and selecting the most relevant ones, analysts can enhance their analyses and support advanced modeling. Tools like Pandas, Alteryx, and automated libraries make these tasks accessible, while emerging trends like graph transformers hint at a future with less manual effort. As data analysis evolves in 2025, experimenting with feature engineering and staying updated on new methods will keep analysts at the forefront of their field.

Connect with me on LinkedIn